Cut the watching, not the proof.

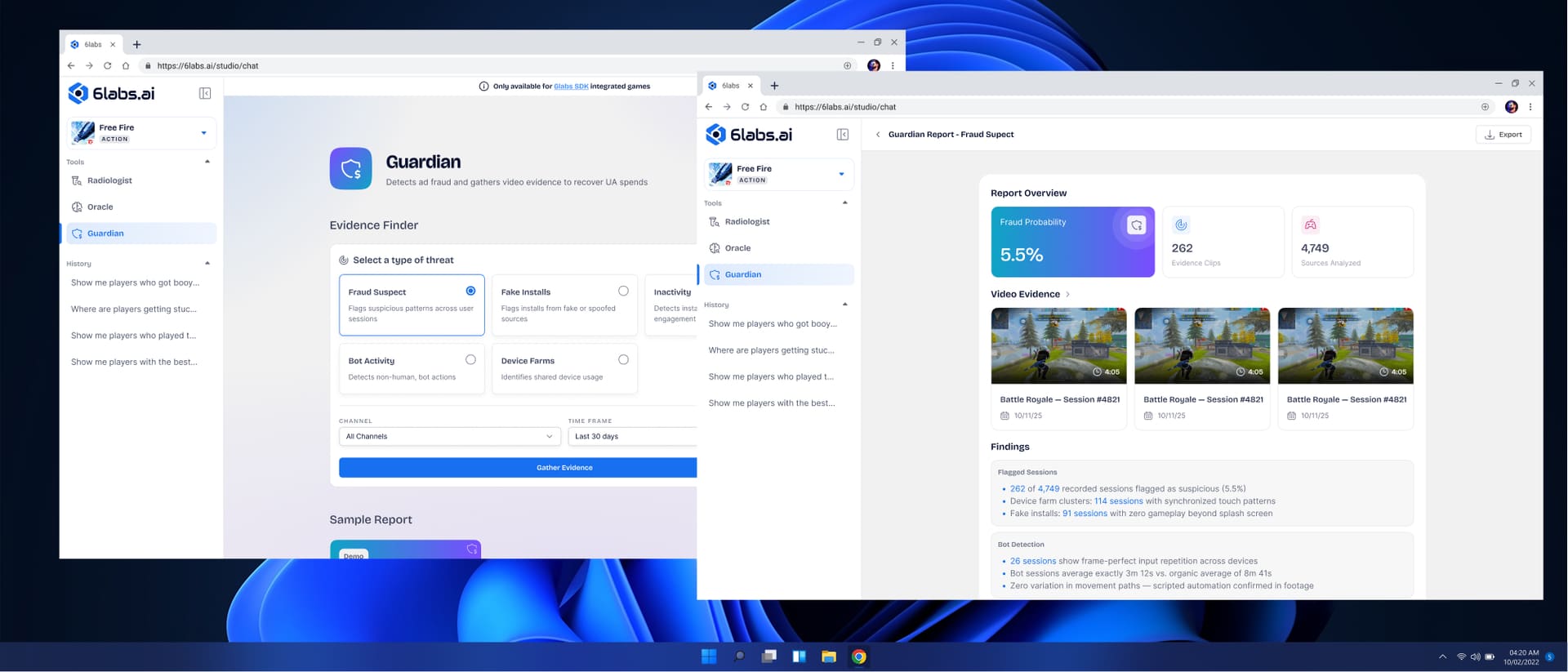

Studios were burning real money to understand their own players. They'd commission playtests, then assign people to watch the recordings to find the moments that mattered. The cost was paid twice — once to run the playtest, again to extract value from it manually.

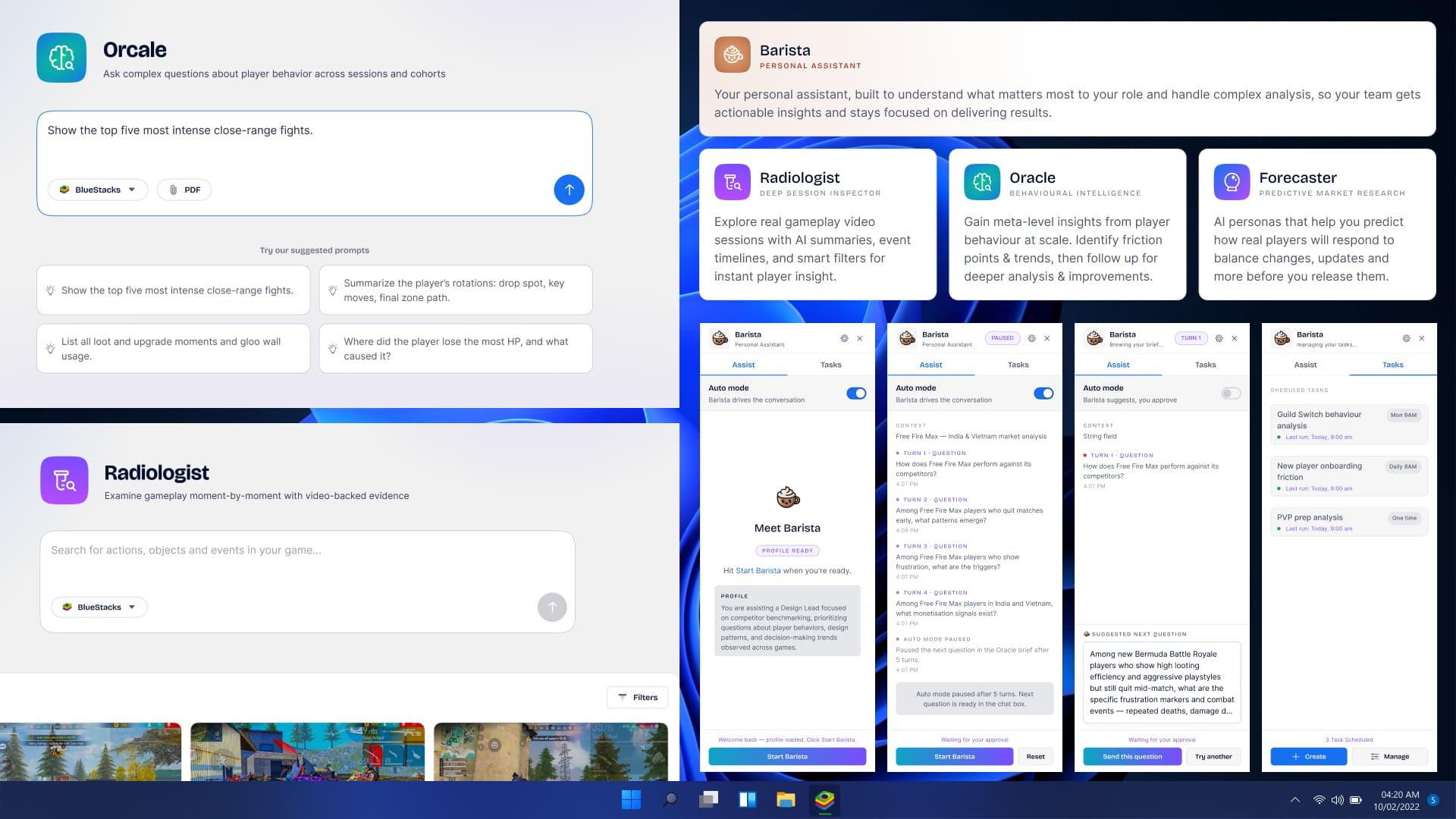

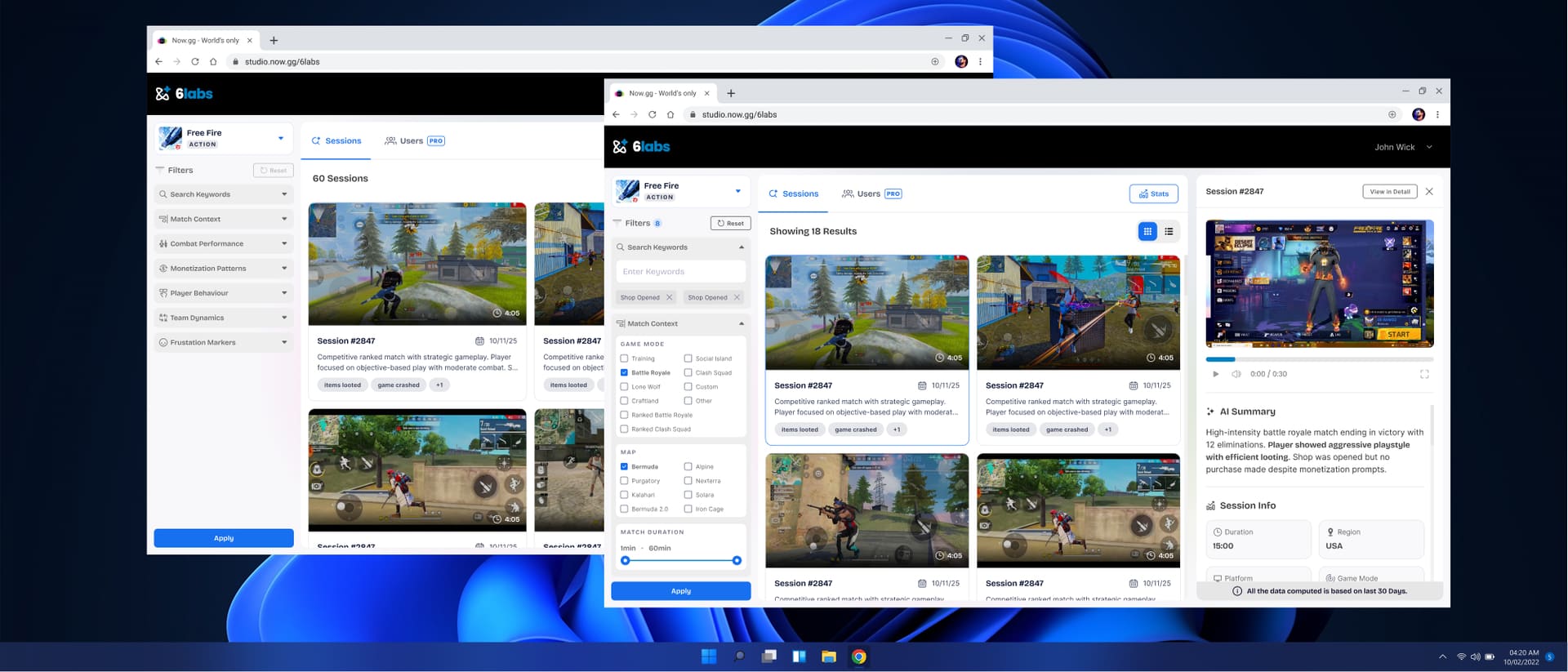

An AI auto-tagged every video in our corpus with player-behaviour signatures. Devs filtered by those tags to surface the exact sessions they wanted instead of trawling through every recording. With limited dev resources, we kept the scope narrow.
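As a rough illustration of the filtering step, here is a minimal sketch that assumes each session carries a set of tags produced by the tagging model. The `Session` structure, the `filter_sessions` helper, and the tag names are all hypothetical, chosen for illustration; they are not the product's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """One recorded playtest session (hypothetical structure)."""
    session_id: str
    tags: set[str] = field(default_factory=set)  # behaviour tags assigned by the model


def filter_sessions(sessions, required_tags):
    """Return the sessions that carry every tag in required_tags."""
    required = set(required_tags)
    return [s for s in sessions if required <= s.tags]


# Illustrative data only; the tag names are assumptions.
sessions = [
    Session("s1", {"rage_quit", "stuck_on_tutorial"}),
    Session("s2", {"long_idle"}),
    Session("s3", {"rage_quit"}),
]

matches = filter_sessions(sessions, ["rage_quit"])
print([s.session_id for s in matches])  # ['s1', 's3']
```

Requiring all selected tags (rather than any of them) is one possible design choice; it narrows results as more tags are added, which suits the goal of surfacing exact sessions.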

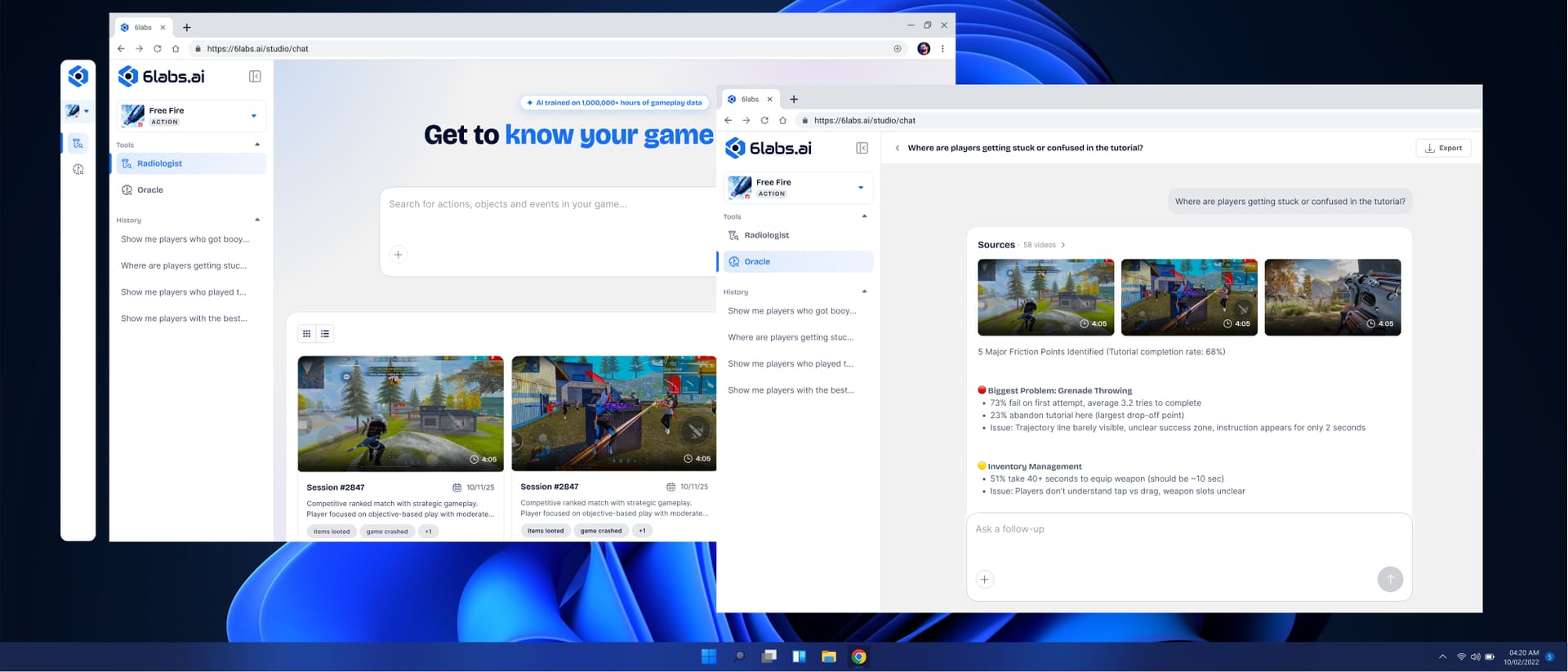

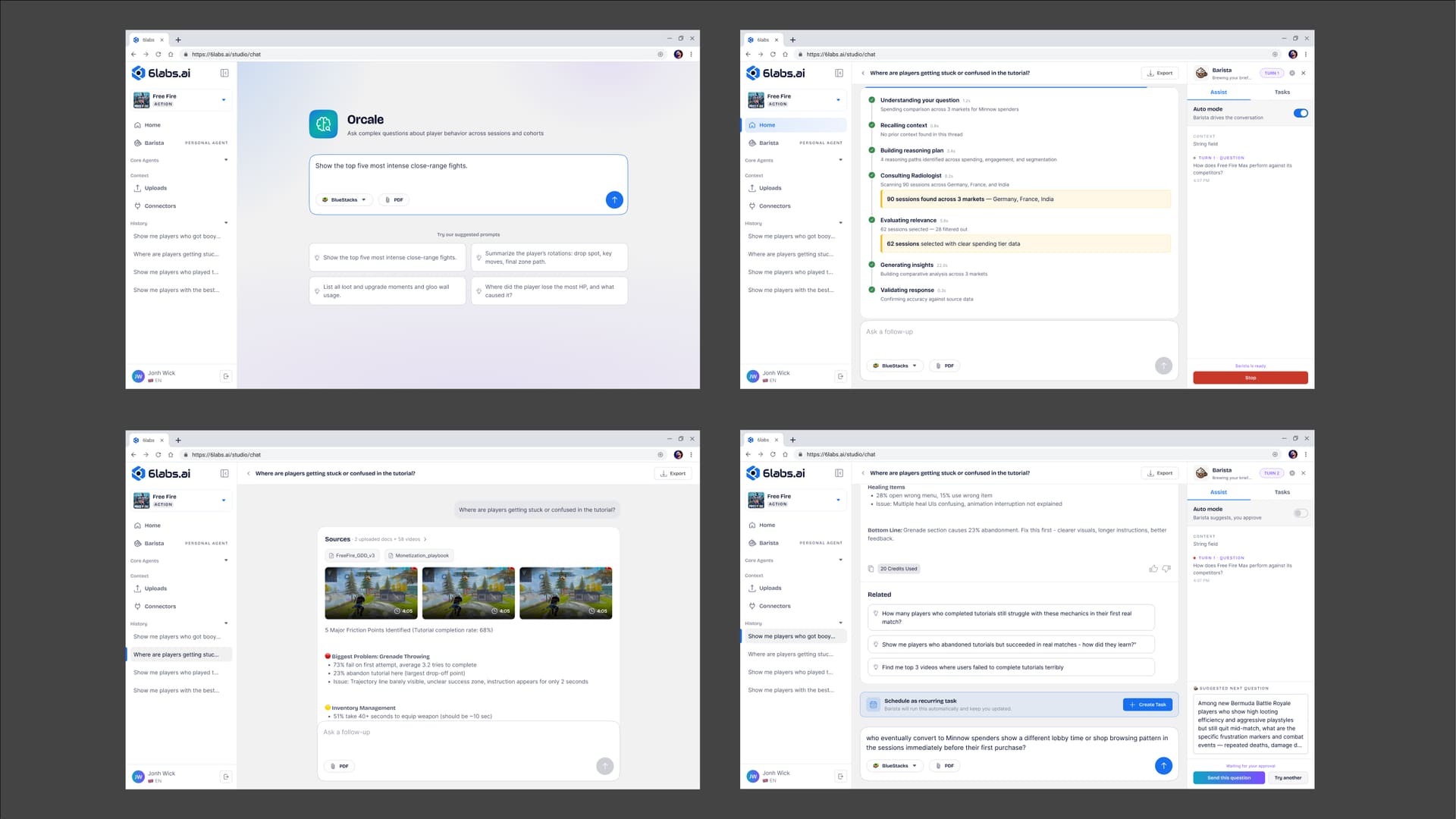

Devs liked it, since it was already faster than the playtest-then-watch loop. Their feedback was consistent: “Go further. We don’t want to watch better videos. We want the answer, with the videos as proof.”

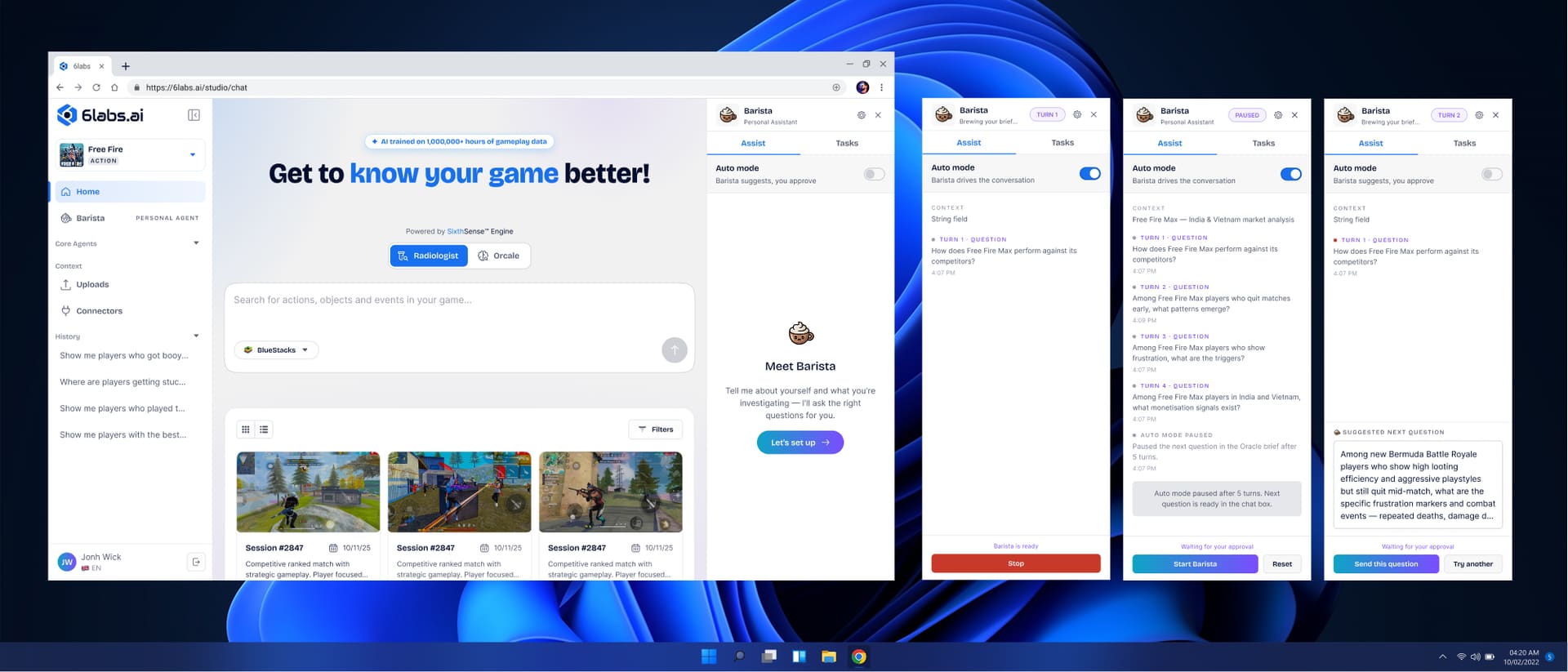

Expand scope. Move from filtered playback to generated insights, with video as evidence underneath.